At the beginning of the year, I like to take a step back to separate lasting signals from passing fads. The past months made one thing unmistakably clear: we are entering an age of realism in all things AI and data, allowing us to move past the hype and into the (sometimes messy) phase of real work and real value. And I see this happening in several domains:

- AI will be treated as a strategic business discipline, not a playground.

- Sovereignty will remain important, but with a more balanced approach.

- Focus will shift from agentic AI hype to the realities of managing these systems.

- Collaboration, especially around data, will become more pragmatic and essential.

- Regulation will be approached in a more measured, realistic way.

Read on to find out exactly what that means for your company, and how Athumi can help:

1 - AI Realism

It’s becoming increasingly clear that AI’s honeymoon period is over. For a while, the conversation focused almost exclusively on its potential (which is undeniably huge) without much scrutiny of real-world revenue, tangible applications, or measurable productivity gains. A recent MIT Media Lab report offered one of the most striking illustrations of this gap: no less than 95% of investments in generative AI have produced zero returns.

The AI Experimentation Trap

Social media erupted with scepticism, but the deeper issue was the “AI Experimentation Trap”: organizations had been launching scattered pilots and proofs of concept, often driven by hype, FOMO, or simple curiosity, without grounding them in real business value or customer problems. The problem isn’t AI itself, though; it’s how it’s being used. AI is not a magic wand. It amplifies a company’s culture and strategy, and if those foundations aren’t sound, no amount of AI will create meaningful impact.

Pieter-Jan De Man, Director at STAS and Squadron, expressed exactly this point in our NextTech podcast conversation on digitization and automation in manufacturing. The technology is rarely the bottleneck, he explained. The real challenge is strategic insight: many companies still lack a clear understanding of how and why they should digitize. Without that clarity, any solution they implement will inevitably fall short.

Work Slop

Another revealing insight came from a new report by Stanford University and BetterUp Labs: 40% of 1,150 U.S. employees said they had received “work slop” (AI-generated, low-quality content) from colleagues in the past month. Employees estimated that about 15% of the work they receive is work slop, and that each instance costs them nearly 2 hours to clean up.

That insight, that we need to learn to use AI better, is another sign that we are finally moving from hype to reality. And that shift is actually a good thing. This is the moment when things get interesting, when the noise fades and real products, services, and breakthroughs emerge. AI’s potential to accelerate progress in science, healthcare, and energy is staggering. Take healthcare, for example:

- Both OpenAI and Anthropic are exploring and launching health tools, from personal health assistants to platforms that unify medical records.

- NASA and Google have developed an AI medical assistant trained to diagnose and treat astronauts when no doctor is available, technology that could also serve remote or underserved regions.

- Microsoft recently introduced the MAI Diagnostic Orchestrator, an AI system that diagnoses complex cases nearly four times more accurately than experienced physicians.

AI tools are only as strong as the data that feeds them. High-quality, accessible, interoperable data is the real engine behind reliable insights and outcomes. This is why data ecosystems, where diverse players across sectors securely share information, are so critical. The biggest obstacles in building them are trust and compliance, especially when competitors are involved. That’s where neutral, EU-certified data intermediaries like Athumi can play a crucial role, enabling secure and compliant data collaboration while protecting the interests of all participants.

2 - Sovereign Balance

More than ever, and especially since the geopolitical landscape grew even more volatile with the arrival of President Trump, digital sovereignty has become one of the defining challenges of the global AI race.

Nexperia Drama

The Nexperia saga of last year laid bare the fragility of Europe’s semiconductor supply chain and the absence of any coherent strategy to defend it. In September 2025, the Dutch government placed the Chinese-owned chipmaker under state control, citing fears of intellectual-property leakage, asset stripping, and threats to chip security. Using emergency powers, it froze all major corporate decisions. Beijing denounced the move as protectionist and retaliated by restricting exports of Nexperia-made chips, triggering an immediate shock across the global automotive industry and exposing, rather than mitigating, Europe’s vulnerability.

By mid-November, as diplomatic pressure mounted and industrial disruption intensified, The Hague quietly suspended its takeover order and Nexperia resumed normal operations. China soon relaxed its export controls. But the damage was done. The episode underscored a sobering reality: in today’s geopolitically charged economy, Europe’s technological sovereignty rests on far shakier ground than policymakers are willing to acknowledge.

Full Decoupling Is An Illusion

So yes, technological sovereignty matters, and initiatives like the Eurostack movement or OpenChip’s ambition to build a European alternative to Nvidia deserve strong support. But, as with my first trend, we also need realism. The technological supply chain, whether it concerns chips, infrastructure, data centers or manufacturing, is extraordinarily complex. Fully decoupling it across continents would be not only expensive, but strategically counterproductive. As a firm believer in collaboration, I fear that hard decoupling would leave every region weaker, less innovative, and more isolated.

What we need instead is balance: a world where each continent owns a segment of the supply chain that is genuinely irreplaceable. That kind of mutual interdependence prevents dominant players from coercing others by withholding raw materials, technology, or infrastructure. For Europe, this means becoming indispensable in specific areas. We already have champions like imec, ASML and Mistral, but we will need more if we want to match the strategic depth of the U.S. or China.

One of the strongest paths to that irreplaceability is collaboration through data sharing. Europe already has key enablers in place:

- Regulations like the Data Act, which stimulates secure data exchange

- High-quality, diverse datasets that can fuel new business models

- Neutral data intermediaries such as Athumi that can build trustworthy data ecosystems

- And a deep pool of local talent

Openness As A Strategic Advantage

Another major competitive advantage in the AI race is our openness. China has already demonstrated that open-sourcing large language models leads to faster, cheaper, more innovative progress. Low-cost open-source or open-weight models like DeepSeek, Kimi K2 Thinking, and Emu 3.5 have surpassed many U.S. competitors in benchmarks.

In Europe, we are moving in the same direction. A growing alliance of more than 20 leading research institutes, companies, and HPC centres is for instance working on the OpenEuroLLM project, which aims to develop next-generation open-source multilingual LLMs for commercial, industrial, and public use. Mistral, one of Europe’s most prominent AI companies, is also advancing the frontier with its high-performance open-weight models.

Data ecosystems too embody the openness that will strengthen Europe’s sovereign position. They are the clearest proof that collaboration, not isolation, is our most powerful strategic edge, especially in this world that is geopolitically more divided than ever.

3 - Active AI

One of the most promising fields of technology has everything to do with what I like to call “Active AI”: AI systems that can act, both online as AI Agents and in the real world, via robotic hardware combined with Physical AI or Large World Models.

When it comes to robot trends, for instance, I am very intrigued by how companies are training their machines. Sometimes that happens in real-life factories, as with China's AgiBot on a production line at Longcheer Technology. Often it happens in more contained environments, like the glass-walled lab where humans act out the basic motions of everyday life to train Tesla’s Optimus robot. GrayMatter Robotics even opened a 100,000-square-foot “Physical AI Innovation Center” in California, where over 25 robot cells sand, buff, and spray real customer parts. China is also sending its humanoid robots to “boot camp”: massive robotics training hubs spread across cities nationwide. In these facilities, robots are pushed through a wide range of real-world scenarios, generating vast amounts of training data that manufacturers use to rapidly accelerate development and deployment.

A Teleoperated Robot In Your House

Robotics company 1X has perhaps the most controversial approach: in a first phase (at the end of 2026), its NEO household robot will primarily be operated by remote workers. The reason, of course, is that this lets the company capture real-life data, which is messier but far more valuable than data from controlled lab environments. To put it in the words of its CEO Bernt Børnich: “If we don’t have your data, we can’t make the product better”. On top of teleoperation, the machine will be gathering data from houses to train its AI software, so that it’ll be able to perform tasks autonomously in the future.

The growing autonomy of our systems, be it AI agents or embodied intelligence like robots or self-driving cars, will always come at a cost to privacy and security, though, because to learn effectively and act appropriately they must be continuously supplied with live, sensitive and, in the case of robots, real-world data. That is why protecting the privacy of consumers and citizens has never been more important, especially in ways that do not hinder the positive evolution of our technological systems.

This is precisely why we at Athumi believe so strongly in the concept of data pods: a model in which the people who generate data remain its controllers, with the explicit power to decide whether and how their data is shared. This principle is just as critical for robots collecting real-world data as it is for AI agents, which will increasingly be able to control our browsers and access highly sensitive information such as email and financial accounts.
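For readers who think in code, the principle can be sketched in a few lines of Python. This is an illustration only, not Athumi's actual implementation or any real data pod API: the `DataPod` class, its method names, and the sample data are all hypothetical. It shows the core idea that nothing leaves the pod unless the owner has granted a specific requester access to specific fields for a specific purpose.

```python
from dataclasses import dataclass, field


@dataclass
class DataPod:
    """Hypothetical, simplified data pod: the owner decides who may read what."""
    owner: str
    records: dict[str, str] = field(default_factory=dict)
    # (requester, purpose) -> names of the fields the owner agreed to share
    grants: dict[tuple[str, str], set[str]] = field(default_factory=dict)

    def grant(self, requester: str, purpose: str, fields: set[str]) -> None:
        """Owner explicitly allows a requester to read some fields for one purpose."""
        self.grants[(requester, purpose)] = set(fields)

    def revoke(self, requester: str, purpose: str) -> None:
        """Owner withdraws consent; later reads for this purpose are refused."""
        self.grants.pop((requester, purpose), None)

    def read(self, requester: str, purpose: str) -> dict[str, str]:
        """Return only the fields covered by an existing grant."""
        allowed = self.grants.get((requester, purpose))
        if allowed is None:
            raise PermissionError(f"{requester} has no consent for '{purpose}'")
        return {k: v for k, v in self.records.items() if k in allowed}


# Illustrative data only: a household pod holding three kinds of information.
pod = DataPod(
    owner="alice",
    records={
        "email": "alice@example.org",
        "iban": "BE00 0000 0000 0000",
        "floor_plan": "2 rooms",
    },
)

# The owner shares the floor plan with a household robot, and nothing else.
pod.grant("household-robot", "navigation", {"floor_plan"})
shared = pod.read("household-robot", "navigation")
```

In this sketch the robot receives only the floor plan, while a shopping agent that was never granted anything gets a `PermissionError`. A real deployment would of course add authentication, auditing, and encryption on top of this consent check.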

Practical Management

Another compelling sub-theme here is that, although the field of “Active AI” is still highly emergent, companies are already thinking well beyond the core technology and toward its interaction with surrounding systems such as browsers, operating systems, and payment processes.

Take protocols, for example. Google recently announced the Agent Payments Protocol (AP2), an open standard designed to allow AI agents to carry out transactions securely on behalf of users. Microsoft, meanwhile, is focusing on agent management. Its Agent 365 is built to help organizations govern a growing ecosystem of AI agents by maintaining a comprehensive registry, including so-called “shadow agents” created independently by employees. Microsoft has also repeatedly floated the idea of turning Windows into an agentic OS, though this has triggered reservations from many corners of the industry.

Interoperability

For agents to collaborate effectively across platforms, interoperability standards will also be essential. To that end, Anthropic, OpenAI, Google, Microsoft, Amazon, and others have announced the Agentic AI Foundation, an initiative aimed at developing open-source standards for AI agents, much like the global standards that enable seamless interbank electronic payments today.

At the same time, the web itself is shifting toward a “Zero-Click Internet,” where agents increasingly aggregate and act on information without routing users through traditional search results. In response, tech companies are racing to build agent-native browsers of their own, like OpenAI’s Atlas or Perplexity’s Comet, to gain direct access to the user-activity data required to train more capable autonomous systems.

New Security Threats

Security, too, becomes a central concern in this new paradigm. AI agents, for instance, are uniquely susceptible to prompt-injection attacks, where hidden instructions embedded in web pages or images can manipulate an agent into taking unauthorized actions like emailing bank details or extracting private data without user consent. By safely containing personal information, data pods like the ones we are using at Athumi can significantly reduce the attack surface and make such exploits far harder to execute.

We are clearly entering a realist phase of “Active AI” with AI agents and robots, where experimentation is inevitable and progress is messy. This is the period in which companies begin to uncover the hidden challenges that only surface once technology leaves the lab and collides with the complexity of the real world.

4 - Social AI

“Parasocial” was named Cambridge Dictionary’s Word of the Year for 2025, a telling signal of the times. The term captures the rise of one-sided relationships, and the illusion of intimacy people increasingly feel toward celebrities, influencers, and … artificial systems. In the midst of a growing loneliness epidemic, it’s becoming clear that people are craving connection and are seeking it in places that would have seemed unlikely just a few years ago.

A Social Presence

Not long ago, AI was largely viewed as an information tool: something you queried, not something you related to. Today, it is increasingly being positioned and perceived as a social presence. In the United States, for example, 72% of teens say they have interacted with an AI companion at least once. More than half (52%) qualify as regular users, engaging with AI companions multiple times per month, while 13% report daily use. In another recent survey, one in five high school students said they had been in a romantic relationship with an AI or knew someone who had.

Even ChatGPT, which was never designed as a social platform, appears to be drifting in this direction. Research suggests that while most users do not initially engage with it on an emotional level, those who interact with it for extended periods often begin to describe it as a “friend.”

A New Form Of Companionship

Taken together, these trends point to a deeper shift: as human connection becomes harder to find, technology is increasingly stepping in, not just as a tool, but as a stand-in for companionship itself.

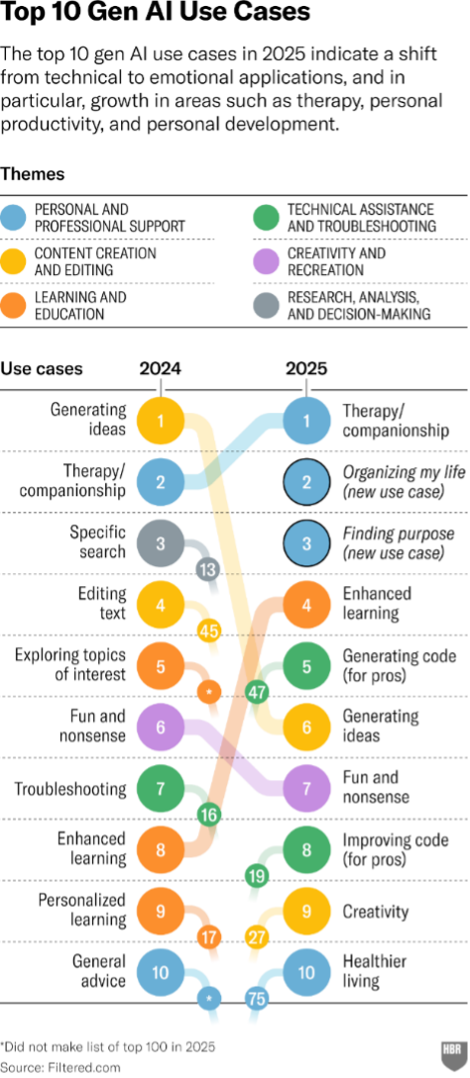

In fact, in 2025, “therapy and companionship” overtook “idea generation” as the number one use case for generative AI, a striking indicator of how people’s relationship with these systems is evolving. Usage patterns also differ sharply by generation. Sam Altman has noted that older users tend to treat ChatGPT as a replacement for Google; people in their 20s and 30s increasingly use it as a life advisor; while college students, perhaps most revealingly, see it as an “operating system” for daily life.

The Side Effects

The social use of AI, however, is producing some genuinely unexpected, sometimes unsettling, side effects. Wired has reported that as romantic relationships with chatbots become more common and begin to strain real-world partnerships, a new legal gray zone is emerging in family law: in some cases, an “AI affair” is now being cited as grounds for divorce.

At the same time, treating AI as quasi-human and turning to it for coaching, guidance, or emotional support has also unlocked legitimate and promising applications. Researchers at Dartmouth, for example, studied 106 participants using TheraBot, an on-demand chatbot trained on therapist-authored transcripts of hypothetical patient–therapist conversations. Among users with diagnosed mental health conditions, the results were notable: depression symptoms dropped by 51%, anxiety symptoms by 31%, and eating-disorder symptoms by 19% over the course of the trial.

The Double-Edged Nature Of AI

Together, these developments highlight the double-edged nature of social AI: capable of both (too) deep emotional entanglement and meaningful therapeutic benefit, often at the same time.

However useful they can be, at the end of the day it's important to realize that:

- These systems are operated by commercial companies that receive access to highly private and sensitive data this way. They can use this to train their systems, sell ads (if that's part of their business model) or even make deliberate design choices to maximize user engagement, a trend researchers call "addictive intelligence".

- These are unstable systems that hallucinate and also have sycophantic tendencies (they tend to flatter and agree with the user's worldview rather than challenge it), which can be extremely harmful for people with mental health issues. We've seen quite a few instances of "AI psychosis" in 2025, which has been linked to severe breaks from reality, the breakdown of families, and even suicides, leading to wrongful death lawsuits against OpenAI and Character.AI.

As with all things AI, there are interesting opportunities in this specific domain, but we must also tread carefully. Social AI should never be a full replacement for human relationships. And the systems should be regulated so that they do not harm the wellbeing of their interlocutors, especially children and teenagers.

Above all, we need to have a discussion about how these systems will use this highly personal data. We need to find ways to protect users and their data while at the same time opening that data up for the good of humanity, for example with data pods that give data creators control over the traces they leave.

5 - Collaboration Is No Longer Optional

If the past months have proven anything, it is that this is no longer the age of the lone genius CEO or company. The world has become so complex that collaboration has become inevitable, even among the biggest tech giants out there.

A Staggering Number Of Collaborations

Project Stargate is a perfect example, since the lack of infrastructure is probably one of the biggest obstacles to growth in the AI industry. OpenAI, Oracle, SoftBank, Nvidia, Microsoft and Abu Dhabi investment firm MGX therefore announced plans to build massive, purpose-built data centers optimized for training and running advanced AI models, with total investment estimates reaching hundreds of billions of dollars over the next decade.

The number of collaborations and (financial) partnerships was pretty staggering over the past months. Just to give some examples:

- Acquisitions and Acquihires: OpenAI acquired Jony Ive’s secretive AI hardware startup io, Meta invested $15 billion to acquire a 49% stake in data-labelling startup Scale AI, and Nvidia conducted a $20 billion acquihire of Groq.

- Multi-Billion Dollar Investments: Nvidia participated in nearly 67 venture capital deals in 2025, including Anthropic, xAI, Thinking Machines Lab, Figure AI and many more, while ASML invested €1.3 billion for an 11% stake in the French startup Mistral AI.

- Strategic Partnerships: Meta is collaborating with defense-tech startup Anduril to build the "EagleEye" AR+AI helmet for the US military, and Apple picked Google’s Gemini to run AI-powered Siri.

- Infrastructure and Utilities: OpenAI and Oracle signed a $300 billion deal for cloud infrastructure and compute, while Nvidia and Intel agreed to a $5 billion collaboration to co-develop data centre and PC chips.

Two Sides

As with most technological shifts, there are two sides to this coin.

First, large technology companies often favour collaborations, partnerships, or acquisitions because these routes are typically faster, smarter, and more cost-efficient than building everything in-house. By pooling capabilities, whether infrastructure, talent, or distribution, participants strengthen one another and accelerate innovation across the ecosystem.

Second, there is also a more uncomfortable counterargument. Concerns are growing about a potential bubble driven by increasingly circular investment dynamics. Major players such as Microsoft, Google, Amazon, and Nvidia are financing AI startups like OpenAI, Anthropic, or xAI not only with cash, but also with cloud credits, compute, and specialized hardware. As a result, much of that capital flows straight back upstream, as startups spend heavily on cloud services from Microsoft, Google, or Amazon, or on GPUs and AI accelerators from Nvidia.

Money stays in a closed loop instead of reaching the broader economy. That can boost valuations in the short term, but it also masks real demand and creates hidden weaknesses.

Still, this dynamic does not necessarily signal failure. As economist Carlota Perez has shown, speculative bubbles are often a standard phase in technological revolutions. When they burst, the industry is not doomed; instead, it transitions into a more mature, productive phase, one where the underlying technology becomes more deeply embedded in the real economy.

Bubble or not, I strongly believe in the power of collaboration, especially in an increasingly complex world where it is no longer technically or financially feasible for one company to master every necessary part of its market on its own. There is strength in numbers, especially when it comes to information and data. That is why I’m so proud to be leading Athumi, which helps companies combine their strengths in intelligent data ecosystems so they can better serve their customers or citizens.

6 - Data And AI Regulation In Flux

Regulation is essential for foundational technologies like AI models, which are simply too powerful to be left to their own devices. Unsurprisingly, the US, the EU and China differ sharply in their regulatory approach, and each region's vision keeps shifting.

US: Aggressive Deregulation

It does not really come as a surprise that President Trump and his administration have been pushing aggressive deregulation and centralization, shifting towards a "build first, ask questions later" approach. They clearly prioritize global dominance over safety-focused restrictions.

Last year, the US House passed legislation to ban independent state-level AI regulations for ten years. The claim is that this prevents a "patchwork" of rules that could stifle innovation and allow rivals like China to gain a lead. Of course, the fact that this also puts more control and power in the hands of the president and his team is probably not a mere coincidence. The Trump administration also revoked a Biden-era executive order that required AI developers to share safety test results with the government, which signaled a move away from federal oversight of model releases.

President Trump's own AI order, in turn, is very much ideologically oriented and contains some big statements, aiming to develop AI systems “free from ideological bias or engineered social agendas.”

China: Protectionism

China, in turn, takes the approach of protectionism and social engineering, pushed by an aggressive drive for technological sovereignty. It has, for instance, introduced a new mandate requiring chip fabs to use at least 50% domestically produced semiconductor manufacturing equipment when expanding production capacity. Beijing also ordered all state-funded data centers to stop using or purchasing foreign AI chips. It has offered to cut power bills by up to half for large data centres from firms like Tencent and Alibaba if they adopt local AI chips made by Huawei and Cambricon.

On the other hand, in a more open measure, China has also introduced the "K-visa" for scientists to attract foreign experts, in direct contrast with the tightening visa restrictions in the United States.

Europe: Simplification

Europe, too, has been undergoing a strategic shift. It has attempted to balance its landmark AI Act with the need to prevent economic stagnation, and has also been influenced by intense pressure from Donald Trump and Big Tech to simplify burdensome regulation.

After years of aggressive regulation, it has been scaling back its attack on Big Tech. It laid out a “digital package of simplification” aimed at streamlining rules on AI and privacy. It has also been stripping protections from the GDPR, including simplifying its infamous cookie permission pop-ups, while choosing to relax or delay landmark AI rules in an effort to cut red tape.

Regulation Drives Innovation

On the other hand, Europe remains the continent that has the most respect for the privacy and safety of its citizens, and we should absolutely be proud of that. I am also a firm believer that, if done right, regulation can be a true driver of innovation. I completely disagree that there is always a choice to be made between legislation and innovation. It's a myth that it's impossible to implement new ideas when you are constrained by laws.

In fact, I wrote a piece about how constraints can stimulate innovation, with many examples ranging from the Indian concept of “Jugaad” (a mindset of resourceful problem-solving in the face of adversity) to the rapid advancement of AI in China, where companies have been so clever at working around the limitations set by the US when it comes to advanced chips.

It’s also far too simplistic to blame Europe’s struggle to stay competitive solely on its stricter regulation. There are bigger structural challenges we need to address. We must tackle the fragmentation of our capital markets and policymaking, invest in expanding local digital infrastructure, and double down on building balanced technological sovereignty.

Last, but not least, there are innovative ways to unlock our treasure trove of European data that are perfectly safe, secure and compliant with the EU Data Act and the EU AI Act, for instance via data pod technology. The EU Data Act is also a cornerstone of Europe’s ambitious data strategy to create a unified market for data. Its commendable goal is to ensure that data from connected devices and services like smart cars, wearables, or IoT-powered industrial machines can move freely and fairly, so that European companies can innovate in ecosystems by sharing their data, as my company Athumi helps enable.

A Time Of Realism

All in all, if there is one thread running through these 6 trends, it is this: the era of AI experimentation, hype, and “magic” is giving way to something far more demanding, and far more valuable. Now is the time to build real products and real services. Much of the mystique (and, at times, pure marketing language) of recent years is evaporating, and 2026 will mark a turn toward realism:

- AI will be treated as a business discipline, not a playground. Companies will increasingly understand that AI is not a fun side experiment, but a strategic capability that requires a clear, business-model–driven approach, supported by robust systems and high-quality data. AI is not a magic wand. It is a mirror that reflects the strengths and weaknesses of an organization.

- Sovereignty ambitions will become more grounded. We will find a healthier balance in our sovereignty efforts, acknowledging that absolute sovereignty is largely a utopia. Pragmatism will replace purity tests.

- From storytelling to stewardship. The focus will shift away from glossy narratives about autonomous and “agentic” AI toward the very real challenge of managing these systems. This means new types of platforms, protocols, standards, governance models, and even new kinds of browsers and interfaces.

- Collaboration will move from idealism to necessity. We’ll think more realistically about collaboration, especially around data, because the challenges we face are simply too large to solve alone. That’s not a weakness but rather a strength. More collaboration fosters alignment and mutual understanding, something we badly need in increasingly polarized times.

- A more mature view on regulation. Finally, we’ll become more measured and realistic about regulation. Yes, it should not stifle innovation, and yes, some rules could be simpler. But regulation is not inherently restrictive, despite what some claim. When designed well, it can be a powerful driver of growth and innovation.

Magical storytelling and radical visions of the future are fun, but they are not real (yet). I prefer building things, even if real (business) life is messy, complex, and hard. In the end, that friction is precisely what creates business value.